Prompting Is Not Programming

On the Dangerous Comfort of the Blinking Cursor

A man sits at his desk, composing instructions for a machine he believes is listening.

He types carefully. He uses semicolons. He capitalizes “IMPORTANT” because he has been told the machine responds to emphasis. He has no way to verify this, but it feels true, which in 2025 is close enough. He formats his prompt the way a foreman formats a work order: with specificity, with sequence, with the quiet confidence of someone who expects compliance.

The machine responds in complete sentences. It thanks him for his clarity. It organizes its output with headers and numbered lists. It addresses his constraints in order. It sounds, for all the world, like a diligent junior associate who has read the brief and wants to impress.

He ships the output. It is wrong in ways he will not discover for three weeks.

This is not a failure of the machine. It is a failure of the metaphor.

We have been handed a new instrument and immediately mistaken it for an old one.

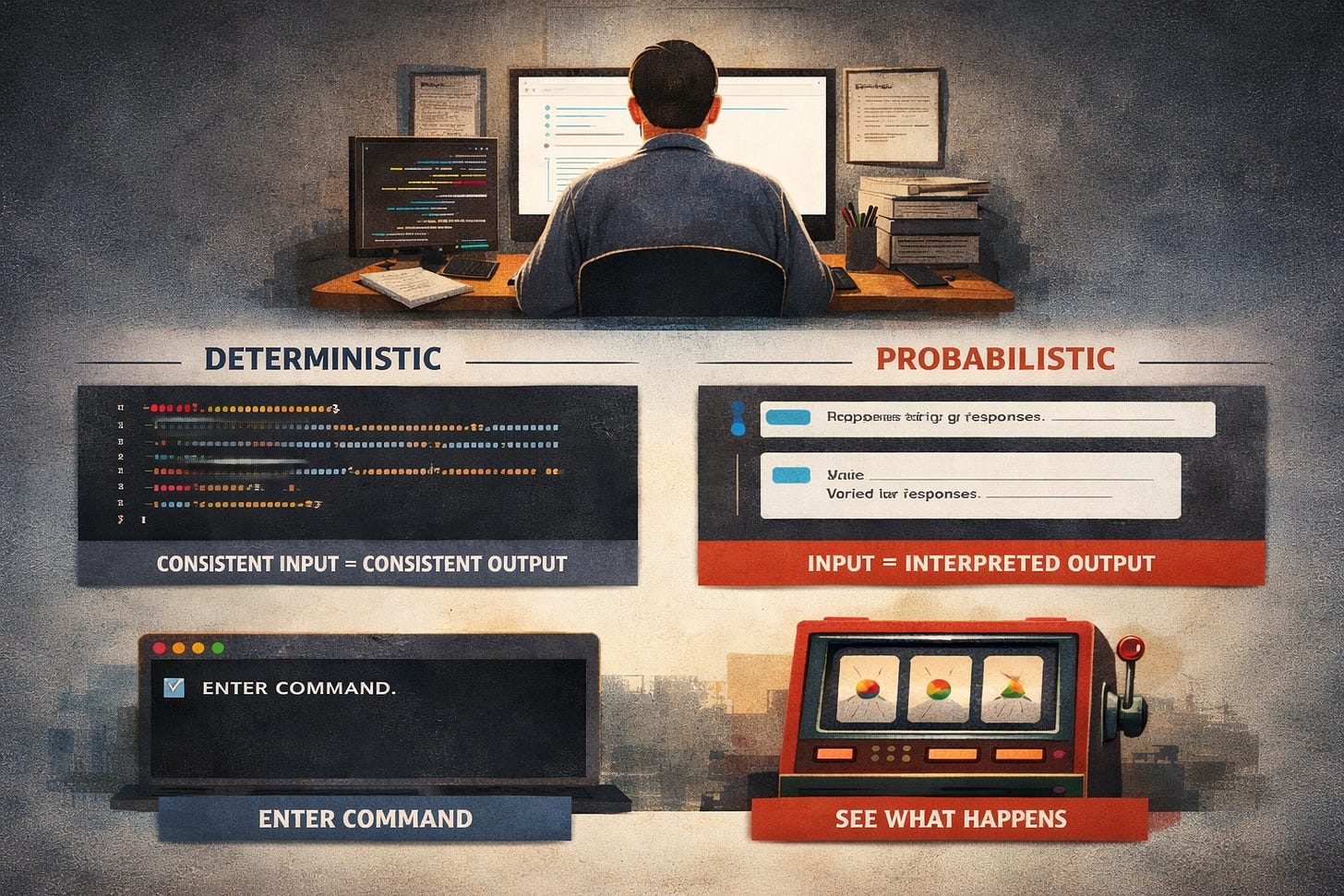

The interface is a text box. You type. It responds. The interaction resembles a command line so completely that the brain fills in the rest: determinism, repeatability, control. You said a thing. It did the thing. Therefore, saying the thing again will produce the thing again.

This is the most natural assumption in the world. It is also wrong.

Traditional programming operates on a contract so boring it barely qualifies as philosophy: given the same input and the same logic, the system produces the same output. Every time. Without variation. Without mood. Without the faintest inclination to improvise. That reliability is not a feature of the tool. It is the tool. Remove it and you do not have a buggy program. You have an expensive random number generator.

Prompting honors no such contract.

When you write a prompt, you are not defining a path through logic. You are dropping a suggestion into a probability field and watching what crystallizes. The model does not execute your instruction. It interprets it, the way a session musician interprets a chord chart: faithfully enough to be useful, loosely enough to surprise you, and occasionally in a key you never discussed.

The result may be consistent. It may also drift. And the drift will not announce itself, because from the model’s perspective, nothing has changed. It was never promising consistency. You were assuming it.

A prompt is not code. It is a suggestion with weight.

Consider how quickly the illusion fractures under pressure.

Reorder your instructions and the response shifts. Add an example and the tone recalibrates. Expand the context window and the model begins reinterpreting constraints it appeared to respect three paragraphs ago. What looks like a minor edit produces a disproportionate effect, not because the apparatus is fragile, but because you were never modifying logic. You were perturbing a field.

This is why prompts that “work” do not travel. Move one between models, or between versions of the same model, or sometimes between Tuesday and Wednesday, and its behavior changes. The surface looks identical. The terrain beneath it has shifted. You are standing on the same patch of ground and the geology is different.

The people who discover this early tend to be the ones building systems that depend on stability. They write a prompt. It performs. They integrate it. They ship it. And then, without warning or error message, the outputs begin to wander. Not dramatically. Not obviously. Just enough to be wrong in ways that require a human to catch, delivered with the unshakable poise of a machine that does not know it has drifted and would not care if it did.

The consultant who built a twelve-step client workflow on prompt reliability learns this when step seven starts contradicting step four. The marketing team learns it when the brand voice they “locked in” begins migrating toward a tone no one approved. The healthcare startup learns it when a triage summary omits a drug interaction that was flagged last Tuesday and is silently absent this Thursday.

Everyone learns it. The question is the cost of the lesson.

This confusion is not new. Only the instrument is.

Every generation encounters a tool that resembles the one it replaced just closely enough to be misread. The automobile had a throttle and a brake, so people drove it like a fast horse and were surprised when it did not stop for water or sense the edge of a cliff. Early radio broadcasters read newspaper copy into microphones, as if the medium were print with better distribution, and it took a decade before anyone understood that the ear and the eye process authority differently. Television inherited radio’s formats wholesale, and for years the most expensive technology in American living rooms was used to broadcast people sitting at desks, talking.

The pattern is reliable: a new medium arrives wearing the costume of the old one, and the people who adopt it first are the ones most likely to mistake the costume for the thing. They bring their expectations forward. They assume the contract has not changed. And the medium, which owes them nothing, quietly operates on its own terms until the gap between expectation and behavior becomes impossible to ignore.

Prompting is the latest iteration. The text box looks like a command line. The output looks like a document. The interaction looks like delegation. And so we delegate, with all the confidence of someone who has been delegating to deterministic systems for forty years and sees no reason to update the model.

The reason is that the model has changed. We have not.

The deeper problem is not instability. It is the particular flavor of instability.

A traditional system fails loudly. It throws an error. It crashes. It returns a null value and refuses to proceed. The failure is ugly, but it is legible. You know something went wrong because the system told you, usually in red text, often with a stack trace, occasionally with the digital equivalent of a shrug.

A prompt-driven system does not fail like this.

It fails plausibly.

It produces an answer that looks correct enough to pass inspection, fills gaps with confidence, resolves ambiguity with invention, and never, under any circumstances, admits uncertainty unless you have specifically instructed it to, and sometimes not even then. It does not stop when it does not know. It keeps going. It generates. It completes. It delivers the wrong answer with the same formatting as the right one.

This is the most dangerous failure mode in modern computing: a system that is wrong at the speed of competence.

And the reason it is dangerous is not that the model is deceptive. It is that the interface is. The text box, the conversational tone, the structured output, all of it invites a trust calibration built for deterministic tools. You are extending the confidence you developed over decades of software into a medium that has not earned it and cannot honor it.

The seduction is understandable. Determinism is comforting. It means that if the demo works, the product works. It means that competence transfers, that the person who mastered SQL or Python or Excel can master this too, because mastery has always meant learning the rules and applying them precisely. The entire professional identity of the technical class is built on the premise that systems reward precision. That if you are careful enough, specific enough, rigorous enough, the machine will do what you mean.

Prompting does not punish imprecision. It accommodates it. It fills in what you left out. It smooths over contradictions. It resolves ambiguity not by asking but by choosing, silently, without annotation, in whatever direction the probability gradient slopes. And this accommodation, which feels like helpfulness, is the mechanism by which the model drifts from your intention without either of you noticing.

So replace the metaphor entirely.

Prompting is not programming. It is not even close to programming. It is closer to managing a gifted employee who has read everything ever written, remembers none of it with precision, and will never tell you when they are guessing.

You are not writing rules. You are shaping tendencies. You are narrowing the space of likely responses, not eliminating the unlikely ones. The word “professional” in your prompt does not map to a fixed operation. It maps to a cluster of patterns the model absorbed during training, weighted by context, inflected by everything else in the window, and subject to change the moment you add a sentence.

This is why examples outperform instructions. An example does not tell the model what to do. It shows it what “correct” looks like. The demonstration anchors output far more effectively than a list of requirements, because the machine is not parsing your intent. It is pattern-matching against your demonstration. The distinction sounds academic. It is operational. It is the difference between a blueprint and a suggestion taped to the refrigerator.
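To make the point concrete, here is a minimal sketch of how a demonstration gets into a prompt at all. The `Input:` / `Output:` layout, the function name, and the sample pairs are illustrative assumptions, not a format any particular model requires.

```python
def build_few_shot_prompt(task, examples, new_input):
    """Assemble a prompt that shows worked examples before the new input.

    The "Input:" / "Output:" labels are an assumed convention, chosen
    only for illustration.
    """
    lines = [task, ""]
    for source, target in examples:
        lines += [f"Input: {source}", f"Output: {target}", ""]
    # End on an open "Output:" so the model continues the pattern.
    lines += [f"Input: {new_input}", "Output:"]
    return "\n".join(lines)


# Hypothetical demonstration pairs, invented for this sketch.
EXAMPLES = [
    ("pls send the report asap", "Please send the report at your earliest convenience."),
    ("cant make the mtg", "I am unable to attend the meeting."),
]

prompt = build_few_shot_prompt(
    "Rewrite each message in a professional tone.",
    EXAMPLES,
    "need those numbers today",
)
```

The two pairs carry the definition of “professional” that the instruction line only gestures at. They anchor the output. They do not guarantee it.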

And this is why the people who prompt well tend to be the people who stopped thinking of it as programming the fastest. They do not expect repeatability. They design for variance. They build validation around the output, not trust into the input. They treat the model the way you treat a brilliant but unreliable source: verify everything, cite nothing on faith, and never assume that yesterday’s accuracy predicts tomorrow’s.
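Designing for variance can start with simply measuring it. The sketch below runs one prompt several times and reports how often the most common answer appears. `call_model` is a hypothetical stand-in for whatever client you use, assumed to take a prompt string and return a string; nothing here belongs to a real library's API.

```python
from collections import Counter


def agreement(call_model, prompt, runs=5):
    """Run the same prompt several times.

    Returns the most common answer and the share of runs that produced it.
    `call_model` is a hypothetical callable: prompt string in, text out.
    """
    # Normalize lightly so trivial whitespace or case changes do not count as drift.
    answers = [call_model(prompt).strip().lower() for _ in range(runs)]
    top_answer, count = Counter(answers).most_common(1)[0]
    return top_answer, count / runs


def needs_review(call_model, prompt, runs=5, threshold=0.8):
    """Flag a prompt for human review when the runs disagree too often.

    The 0.8 threshold is an arbitrary illustrative default.
    """
    _, share = agreement(call_model, prompt, runs)
    return share < threshold
```

Exact-match agreement only suits short, closed answers such as labels or yes/no verdicts. Free-form text would need a looser comparison, and a high score says the model is consistent, not that it is right.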

The temptation, of course, is to fix this by prompting harder.

More specificity. More constraints. More capital letters. More “IMPORTANT.” More formatting. More of the ritual behaviors that feel like control because they require effort, and effort, we have been trained to believe, correlates with results.

It does not. Not here.

You cannot engineer determinism into a probabilistic engine by typing more carefully. You can narrow the distribution. You can make the likely outputs more useful. But you cannot eliminate the variance, because the variance is not a defect. It is the mechanism. The engine works by predicting what comes next, and prediction, by definition, is not certainty. It is a bet. A very sophisticated, very well-informed bet, placed again for every token it emits, with no awareness that it is betting.
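A toy version of the bet, for readers who want to see it. This is a simplified sketch of temperature sampling over three candidate words, with scores invented for illustration. A real model scores tens of thousands of tokens and its internals are far more involved, but the shape of the gamble is the same.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next(tokens, scores, temperature, rng):
    """Pick one token at random, weighted by its probability."""
    probs = softmax(scores, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Hypothetical candidates and scores, made up for this sketch.
TOKENS = ["formal", "friendly", "terse"]
SCORES = [2.0, 1.5, 0.5]

wide = softmax(SCORES, temperature=1.0)    # the favorite wins a bit over half the time
narrow = softmax(SCORES, temperature=0.2)  # the favorite wins most of the time
pick = sample_next(TOKENS, SCORES, 1.0, random.Random(0))
```

Lowering the temperature narrows the distribution, and every candidate still keeps a nonzero probability. That is the whole argument in two lists of numbers: you can tilt the odds, and the unlikely outcome remains on the table.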

The people who understand this build systems that assume the prompt is a soft layer and enforce structure elsewhere. They validate outputs. They check for consistency. They introduce friction where the stakes are high. They do not expect the model to be right. They expect it to be useful within constraints that they, not the model, define.
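Enforcing structure elsewhere can be as plain as refusing to accept output that does not fit a shape you chose. The sketch below assumes the model was asked for JSON with two fields. The field names, the `call_model` callable, and the retry count are all hypothetical, picked to echo the triage example above.

```python
import json

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"summary": str, "drug_interactions": list}


def validate(raw_text):
    """Return (parsed, problems). An empty problems list means the output passed."""
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value is not an object"]
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in data:
            problems.append(f"missing field: {name}")
        elif not isinstance(data[name], expected):
            problems.append(f"wrong type for {name}")
    return (data if not problems else None), problems


def generate_checked(call_model, prompt, max_attempts=3):
    """Call the model until its output validates, or fail loudly.

    `call_model` is a hypothetical callable: prompt string in, text out.
    """
    problems = []
    for _ in range(max_attempts):
        data, problems = validate(call_model(prompt))
        if data is not None:
            return data
    raise ValueError(f"no valid output after {max_attempts} attempts: {problems}")
```

This converts one kind of plausible failure into a loud one. It catches a missing field. It cannot tell whether the list of interactions is complete, which is where the human friction belongs.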

The people who do not understand this build systems that work in the demo and wander in production.

Programming was a discipline of elimination. You removed uncertainty until what remained was reliable. The system did what you said, no more, no less, and the silence between operations was the silence of obedience.

Prompting is a discipline of management. You are not eliminating uncertainty. You are living with it. You are building around a machine that generates with confidence and accuracy in roughly that order.

The blinking cursor looks the same as it always has. The contract behind it is entirely different. And the difference is not technical. It is temperamental. Programming rewarded people who believed in control. Prompting rewards people who have made peace with its absence.

The man at his desk is still typing carefully. He is still capitalizing “IMPORTANT.” He still believes that if he is precise enough, the system will do what he means.

It will do what it predicts. And prediction, no matter how sophisticated, is not obedience. It is a very fast, very fluent form of guessing by a system that does not know the difference and will never need to.

Thank you for your time today. Until next time, stay gruntled.
